The use of Grok, X’s artificial intelligence assistant, has multiplied among users of the social network, many of whom treat it as a source of information and a way to verify content, despite its limited ability to check facts and the high error rate in its answers, according to experts and studies.

One example is a case that has gone viral in recent days: a user shared an image of a family in substandard housing to denounce the conditions in which some Spaniards lived under the Franco regime.

Grok: Why is it so unreliable?

Another user picked up the post and asked Grok for information about the image. The artificial intelligence replied that it showed a family of sharecroppers in Alabama, in the United States, and therefore had not been taken during the Franco regime.

Grok’s response provoked a string of insults and put-downs aimed at the user who had said the image was from Spain.

This happened even though a simple Google Lens search shows that the photograph was indeed taken in a home in Malaga in 1953, as a historian revealed in an X thread.

This user asked Grok to revise its answers after giving it the original sources of the photograph.

However, the tool reaffirmed its error, attributing the image to the United States.

After several attempts, it was only when the user placed the image from Franco’s Spain in the same tweet alongside the one Grok had identified, which is visibly different, that X’s AI recognized its mistake.

Even so, it took more than two hours to do so, and that post had barely any views, compared with the millions of views received by the post that sparked the controversy with false information.

“AI-based chatbots seek to generate convincing, well-structured text, and whether it is accurate or true doesn’t matter,” Javi Cantón, professor and researcher at the International University of La Rioja and an expert in AI and misinformation, tells EFE Verifica.

Cantón points out that these tools do not know how to “check facts in real time or apply journalistic verification criteria.”

“They don’t understand what they claim. In short, they generate linguistic plausibility, not factual truth,” he stresses.

The limitations of these tools are compounded by “ideological biases and the elimination of unethical limits that have been included in their programming,” he adds.

According to a Business Insider investigation, Grok was trained with “prompts” (instructions given to an AI system to guide its response) aimed at turning the tool into an alternative to what Elon Musk considers “woke chatbots,” such as ChatGPT.

Former employees of xAI, Musk’s company, told Business Insider that, in their opinion, the methods for training Grok “seemed to place a high priority on right-wing beliefs.”

High error rate in its answers

Research published by the Columbia Journalism Review evaluated the ability of eight artificial intelligence tools to access, present and cite the news stories they used as sources.

The study concluded that these chatbots generally “did not know how to reject questions they could not answer accurately, and instead provided incorrect or speculative answers.”

In fact, X’s artificial intelligence answered 94% of the questions posed to it by the researchers incorrectly.

On its website, Musk’s company warns that because “Grok has been trained on publicly available information,” its responses may sometimes include “misleading or inaccurate information.”

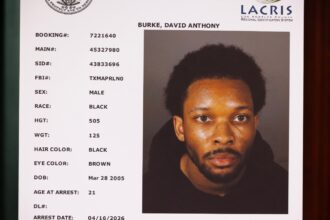

A few weeks ago, the newspaper El País published a photograph of the writer and academic Antonio Muñoz Molina to illustrate an interview.

A user asked Grok for the brand and price of the watch Muñoz Molina was wearing in the picture, to which the artificial intelligence replied: “The watch worn by Antonio Muñoz Molina in the picture is a Rolex Datejust 36 mm with white dial, fluted bezel and Jubilee bracelet. Approximate price: €10,000-15,000.”

In reality, the watch was a Festina. But, as happened with the old photograph from Malaga, the query to Grok (now deleted, though it had accumulated almost 700,000 views) set off an avalanche of insults against the writer.

These tools are designed “to agree with us and flatter us,” so “we cannot trust 100% what they tell us,” warns Cantón.

That is why, he explains, the use of chatbots to verify content can “contribute to reinforcing echo chambers.”
